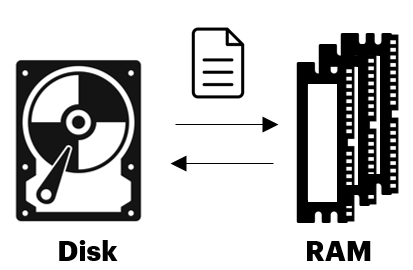

The Spill problem happens when data from an RDD (Resilient Distributed Dataset, the fundamental data structure in Spark) is moved from RAM to disk and then read back into RAM again.

Simply put, this behavior occurs when a data partition is too large to fit in the executor's RAM. Spark writes the surplus data to disk to free up space in the local RAM for the remaining work in the job, and later reads that data back from disk when it is needed again.

This process is slow and expensive!
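To make the RAM to disk to RAM round trip concrete, here is a toy, pure-Python model of the pattern. It is not Spark code. It sorts records while holding at most `max_in_memory` of them in RAM: when the buffer fills up, the buffer is sorted and written to a temporary file (a "spill"), and at the end every spill file is read back and merged with whatever is still in memory. The names `sort_with_spill` and `max_in_memory` are hypothetical. Counting records instead of bytes is a simplifying assumption, because Spark's own spilling is driven by estimated memory usage against the executor's execution memory.

```python
import heapq
import os
import pickle
import tempfile


def _write_spill(sorted_records):
    """RAM -> disk: write one sorted run to a temporary spill file."""
    fd, path = tempfile.mkstemp(suffix=".spill")
    with os.fdopen(fd, "wb") as f:
        for rec in sorted_records:
            pickle.dump(rec, f)
        size = f.tell()  # number of bytes written to this spill file
    return path, size


def _read_spill(path):
    """Disk -> RAM: stream records back from a spill file."""
    with open(path, "rb") as f:
        while True:
            try:
                yield pickle.load(f)
            except EOFError:
                return


def sort_with_spill(records, max_in_memory):
    """Sort `records` while buffering at most `max_in_memory` of them in RAM.

    Hypothetical helper; records are counted instead of measured in bytes.
    Returns (sorted_list, spill_count, bytes_spilled).
    """
    buffer, spill_paths, bytes_spilled = [], [], 0
    try:
        for rec in records:
            buffer.append(rec)
            if len(buffer) >= max_in_memory:
                # Buffer is "full": spill it to disk so the memory can be reused.
                path, size = _write_spill(sorted(buffer))
                spill_paths.append(path)
                bytes_spilled += size
                buffer = []
        # Merge the in-memory remainder with every run read back from disk.
        # The result is materialized as a list only so it can be inspected.
        runs = [sorted(buffer)] + [_read_spill(p) for p in spill_paths]
        result = list(heapq.merge(*runs))
    finally:
        # Clean up the temporary spill files.
        for p in spill_paths:
            os.remove(p)
    return result, len(spill_paths), bytes_spilled


if __name__ == "__main__":
    data = [7, 3, 9, 1, 8, 2, 6, 4, 5, 0]
    out, spills, spilled = sort_with_spill(data, max_in_memory=4)
    print(out)     # [0, 1, 2, 3, 4, 5, 6, 7, 8, 9]
    print(spills)  # 2
```

Shrinking `max_in_memory` produces more spill files, and each one costs an extra disk write plus an extra disk read. That added I/O is the reason a job that spills runs slower than one whose partitions fit in memory.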

A Simple Demonstration of the Spill Problem

In this article, we will go through the topics below to build a clear understanding of the Spill problem in Spark.