Recent advances in weight quantization allow us to run massive large language models (LLMs) on consumer hardware, such as a LLaMA-30B model on an RTX 3090 GPU. This is possible thanks to novel 4-bit quantization techniques with minimal performance degradation, such as GPTQ, GGML, and NF4.
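A quick back-of-the-envelope calculation shows why 4 bits make the difference on a 24 GB card like the RTX 3090. The sketch below assumes a nominal 30 billion parameters (an illustrative round number) and counts only the weights. It ignores activations, the KV cache, and the extra scales or zero-points that 4-bit formats store. The helper `weight_memory_gb` is a hypothetical name chosen for illustration.

```python
# Back-of-the-envelope weight-memory estimate (weights only; ignores
# activations, KV cache, and per-group scales/zero-points of 4-bit formats).
N_PARAMS = 30e9          # nominal "30B" parameter count, assumed for illustration
RTX_3090_VRAM_GB = 24    # the RTX 3090 ships with 24 GB of VRAM


def weight_memory_gb(n_params: float, bits_per_weight: int) -> float:
    """Return the memory needed to store n_params weights, in decimal GB."""
    return n_params * bits_per_weight / 8 / 1e9


for name, bits in [("FP16", 16), ("INT8", 8), ("4-bit", 4)]:
    gb = weight_memory_gb(N_PARAMS, bits)
    fits = "fits" if gb < RTX_3090_VRAM_GB else "does not fit"
    print(f"{name:>5}: {gb:5.1f} GB -> {fits} in {RTX_3090_VRAM_GB} GB")
```

Under these assumptions, FP16 needs about 60 GB and 8-bit about 30 GB, so only the 4-bit weights (about 15 GB) leave headroom on the card.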

In the previous article, we introduced naïve 8-bit quantization techniques and the excellent LLM.int8(). In this article, we will explore the popular GPTQ algorithm to understand how it works and implement it using the AutoGPTQ library.

You can find the code on Google Colab and GitHub.

Optimal Brain Quantization

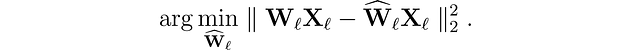

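Let’s start by introducing the problem we’re trying to solve. For every layer $\ell$ in the network, we want to find a quantized version $\hat{\mathbf{W}}_\ell$ of the original weights $\mathbf{W}_\ell$. This is called the layer-wise compression problem. More specifically, to minimize performance degradation, we want the outputs of these new weights on the layer inputs $\mathbf{X}_\ell$, namely $\hat{\mathbf{W}}_\ell \mathbf{X}_\ell$, to be as close as possible to the original ones, $\mathbf{W}_\ell \mathbf{X}_\ell$. In other words, we want to find:

$$\arg\min_{\hat{\mathbf{W}}_\ell} \left\| \mathbf{W}_\ell \mathbf{X}_\ell - \hat{\mathbf{W}}_\ell \mathbf{X}_\ell \right\|_2^2$$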

Different approaches have been proposed to solve this problem, but we’re interested in the Optimal Brain Quantizer (OBQ) framework here.
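To make the layer-wise objective concrete, here is a minimal NumPy sketch that measures it for a simple baseline, not for OBQ or GPTQ. It quantizes a random weight matrix with naive round-to-nearest (RTN) absmax quantization, one scale per row, and computes the squared error between $\mathbf{W}\mathbf{X}$ and $\hat{\mathbf{W}}\mathbf{X}$ on random calibration inputs. The matrix shapes, the bit widths, and the helper names `quantize_rtn` and `layerwise_error` are hypothetical choices for illustration.

```python
import numpy as np


def quantize_rtn(w: np.ndarray, bits: int = 4) -> np.ndarray:
    """Naive round-to-nearest absmax quantization with one scale per row.

    Returns the dequantized weights so they can be compared with the originals.
    """
    qmax = 2 ** (bits - 1) - 1                        # e.g. 7 for signed 4-bit
    scale = np.abs(w).max(axis=1, keepdims=True) / qmax
    scale[scale == 0] = 1.0                           # avoid dividing by zero on all-zero rows
    q = np.clip(np.round(w / scale), -qmax - 1, qmax)
    return q * scale


def layerwise_error(w: np.ndarray, w_hat: np.ndarray, x: np.ndarray) -> float:
    """Layer-wise objective ||W X - W_hat X||_2^2 (sum of squared entries)."""
    return float(np.sum((w @ x - w_hat @ x) ** 2))


rng = np.random.default_rng(0)
W = rng.normal(size=(64, 128))    # weights: (d_row, d_col)
X = rng.normal(size=(128, 256))   # calibration inputs: (d_col, n_samples)

W_hat_4 = quantize_rtn(W, bits=4)
W_hat_8 = quantize_rtn(W, bits=8)
print(f"4-bit RTN layer-wise error: {layerwise_error(W, W_hat_4, X):.2f}")
print(f"8-bit RTN layer-wise error: {layerwise_error(W, W_hat_8, X):.2f}")
```

RTN rounds each weight independently and never looks at $\mathbf{X}$, so it does nothing to reduce the output error beyond rounding each weight to its nearest level. The OBQ framework instead takes the layer inputs into account when choosing the quantized weights.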